The IDE will provide some project-specific context, such as the languages and technologies used in your project. Use the AI Assistant tool window to have a conversation with the LLM, ask questions, or iterate on a task. The current EAP build provides a sample of features that indicates the direction we’re moving in: AI chat For local models, the supported feature set will most likely be limited. We also plan to support local and on-premises models. In the future, we plan to extend this to more providers, giving our users access to the best options and models available.

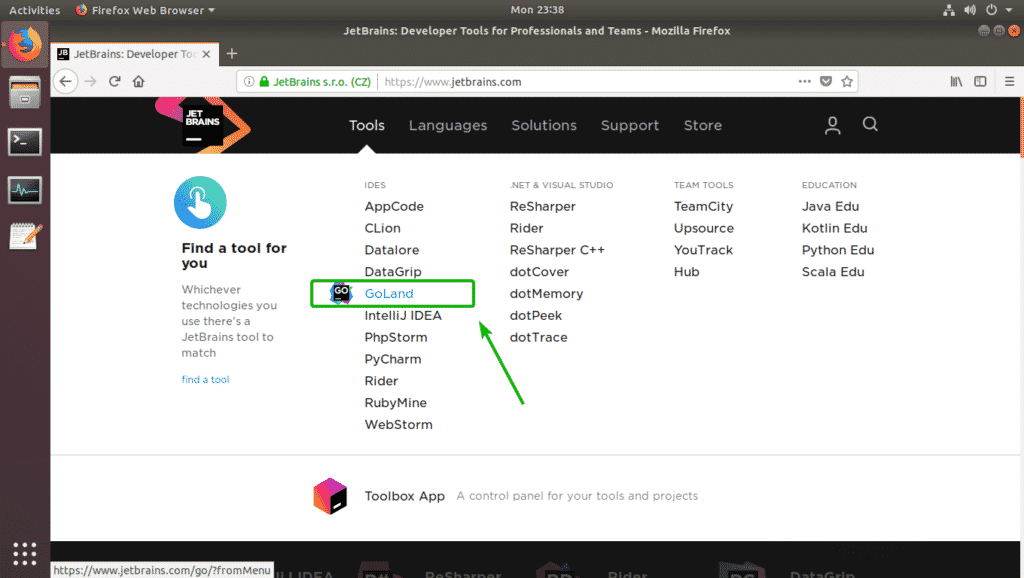

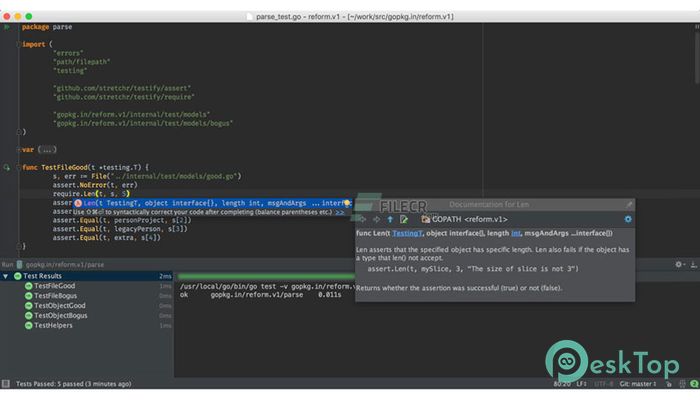

At launch, the service supports OpenAI and additionally hosts a number of smaller models created by JetBrains. The service transparently connects you, as a product user, to different large language models (LLMs) and enables specific AI-powered features inside many JetBrains products. The AI features are powered by the JetBrains AI service. Building a deep integration of AI features with the code understanding, which has always been a strong suit of JetBrains IDEs.Weaving the AI assistance into the core IDE user workflows.Our approach to building the AI Assistant feature focuses on two main aspects: Generative AI and large language models are rapidly transforming the landscape of software development tools, and the decision to integrate this technology into our products was a no-brainer for us. This blog post focuses on our IntelliJ-based IDEs with a dedicated. NET tools include a major new feature: AI Assistant. This week’s EAP builds of all IntelliJ-based IDEs and.

Please note that AI Assistant access may currently be limited by a waitlist. It can be installed as a separate plugin available for versions 2023.2.x. Update, July 13: AI Assistant is available in pre-release versions, but is not bundled with the stable releases of JetBrains IDEs v.2023.2.